During my time at the startup, I took the initiative to bridge the gap between complex academic research and our commercial offerings. For several months, I focused on identifying and distilling the latest developments in AI, LLMs, and diffusion models.

My process was driven by technical curiosity and a focus on utility:

- Academic Distillation: I dived into university papers and technical documentation, translating high-level concepts into actionable insights.

- Knowledge as a Product: I compiled these findings into structured reports in Spanish. The reports were rigorous enough that the startup packaged and sold them as intelligence assets to clients and agencies.

- Practical Validation: I used these insights to run stress tests in Midjourney, moving beyond ‘random prompting’ to investigate how academic theories on visual consistency could be applied to real-world production.

This experience taught me how to quickly synthesize complex information and turn it into a tangible business asset, ensuring that our creative approach was always informed by a deep understanding of the underlying technology.

2022 AI Implementation: Early Commercial Execution

This project marks my first professional experience integrating AI-generated assets into a commercial workflow. Based on an original concept and creative direction by Diego Flores, I was responsible for the technical development and creative execution of the piece.

My role was to translate the foundational idea into a finished film, using the experimental generative tools of 2022 to create and refine the visual assets. I managed the end-to-end production process, including video generation, composition, and editing, and ensured that the AI elements met professional standards. The final sound was created by Pedro Bataller, building on an initial demo I developed, and the copy was written by Daniel Fondón. This collaboration serves as a ‘genetic blueprint’ for my current work: taking a visionary concept and engineering the technical systems required to bring it to life.

Visual Consistency Systems: The Midjourney Lab

These images are not isolated prompts or random tests. They are part of a systematic investigation into mastering visual coherence within AI-generated environments, ensuring multiple assets read as frames from the same cohesive project.

My research focused on repeating specific art direction variables—palette, lighting, framing, scale, and atmosphere—iteratively refining prompts until the model transitioned from improvisation to precision. The objective was not to be surprised by the output, but to control it. These tests laid the technical foundation for integrating Midjourney into professional design and production workflows, where visual consistency is a non-negotiable standard, just as critical as the quality of the individual image.

The Style Laboratory: Stress-Testing Model Constraints

The intent of this research was not to build a single, homogeneous project, but to conduct an expansive investigation into art direction, cinematic lighting, character architecture, and diverse visual genres.

This was a Style Laboratory: a space to test different registers, push the boundaries of prompt engineering, and map exactly where the model reached its functional limits. By intentionally ‘stretching’ the prompts to their breaking point, I gained a deep understanding of the model’s technical constraints. This analytical approach allows me to accurately predict which visual challenges are production-ready and which require hybrid VFX intervention to meet professional standards.

Real-World Production: Operationalizing AI (2024–Present)

Since 2024—beginning at Serena and continuing at Jacaranda—these methodologies have matured from experimental research into validated production frameworks. AI has moved beyond the ‘sandbox’ phase to become a core component of my professional design, motion, and post-production pipelines, coexisting seamlessly with traditional craftsmanship and high-level creative direction.

At this stage, my focus is on operational excellence within high-pressure environments:

- Execution under Constraints: Delivering high-fidelity assets within compressed timelines and evolving client requirements.

- Cross-Functional Collaboration: Integrating AI workflows into diverse teams and established studio ecosystems.

- Strategic Control: Managing last-minute changes while ensuring that every deliverable meets the highest quality standards.

The projects showcased here represent AI used with discipline, control, and responsibility. The technology is never the end goal; it is a strategic tool engineered to serve the visual narrative and the client’s objectives.

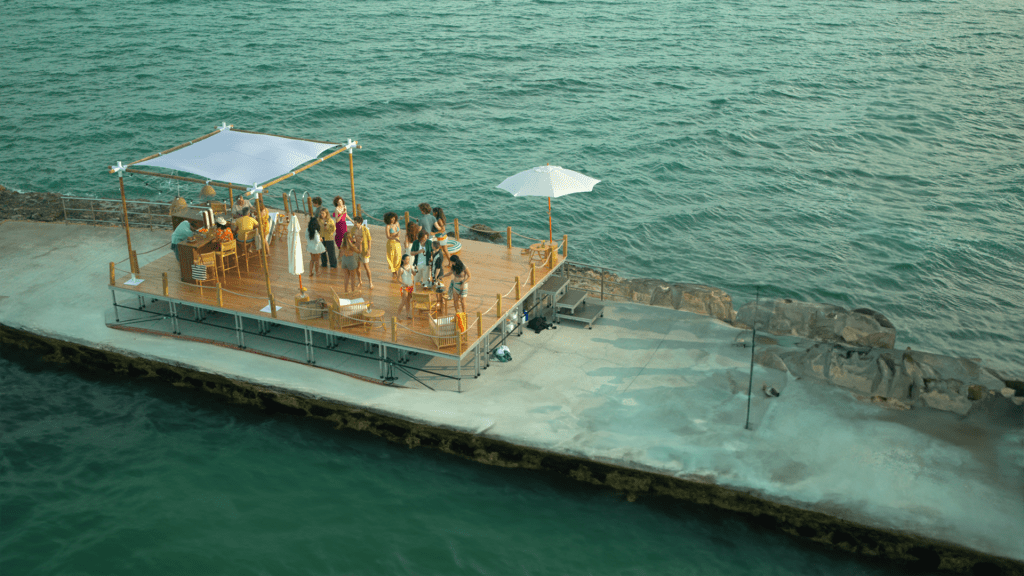

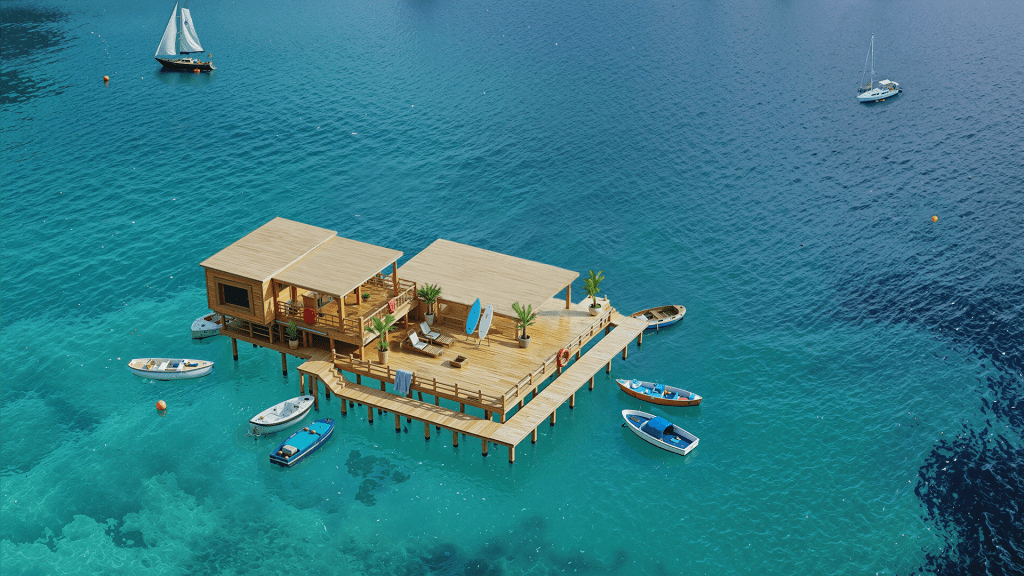

Mahou Concept: Spatial Expansion & Hybrid Environment Design (July 2025)

This project for Mahou is a prime example of solving critical production limitations through an advanced hybrid pipeline. The original footage consisted of a single shot of a small asphalt platform, which needed to be transformed into a massive structure in the middle of the sea, surrounded by water and floating vessels.

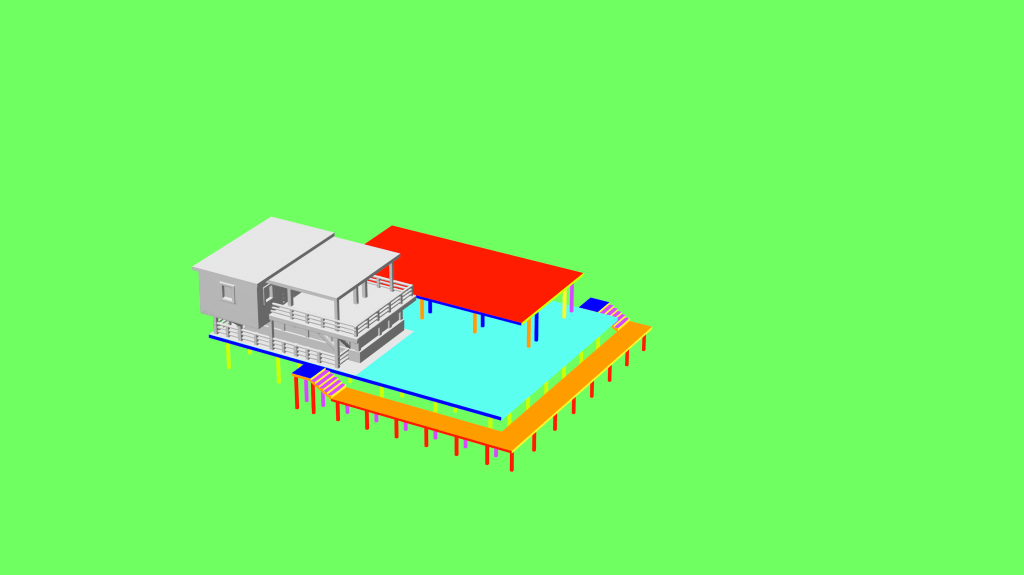

The Technical Solution: To achieve a credible and brand-standard result, I engineered a four-stage workflow:

- Foundation (Cinema 4D): I built a 3D geometric ‘blueprint’ to establish accurate perspective and scale, ensuring the new structure would feel physically grounded.

- Generative Construction (Adobe Firefly): I used Firefly to generate the base structure and expand the environment. I chose it specifically because it adhered closely to the depth and geometry of the 3D blueprint.

- High-Fidelity Integration (Photoshop & Topaz): I meticulously integrated the AI-generated environment with the real footage, using Topaz for texture enhancement and ‘bloom’ effects to ensure seamless visual continuity.

- Temporal Consistency (Firefly Video): I finalized the piece by animating the entire environment, bringing the water and surroundings to life while maintaining the integrity of the original brand asset.

This workflow allowed us to bypass the costs and logistical hurdles of a maritime shoot, delivering a high-impact cinematic result through technical pragmatism and creative orchestration.

Created as a high-concept R&D exploration, ‘Abuelas’ is an audiovisual piece that investigates the delicate friction between machine-driven chance and human-directed control. The project serves as a case study in how generative AI can be integrated into traditional filmmaking without losing the ‘soul’ of the narrative.

The Creative Investigation:

- Hybrid Orchestration: I developed a workflow that meticulously blends AI-generated assets with manual editorial decisions. The focus was on ensuring that the final rhythm, animation, and emotional arc were steered by professional human intuition rather than algorithmic randomness.

- Human-in-the-Loop Methodology: By treating the AI as a ‘generative lens,’ I used manual editing and precise animation timing to reclaim control over the visual output. This ensured that the AI served the storytelling, proving that high-end narrative impact is achieved through intentional direction and creative stewardship.

This piece demonstrates my ability to lead hybrid creative processes, where technology provides the raw material but the final emotional and rhythmic standards are engineered through professional craftsmanship.

Patiele: AI-VFX Music Video Pipeline (WIP, Oct 2025)

This project is a technical exploration into integrating Generative AI within a strictly controlled narrative framework. The goal is to move beyond ‘random generation’ and establish a predictable, professional production pipeline that treats AI through the lens of traditional animation logic.

The 10-Step Hybrid Methodology: To maintain absolute aesthetic coherence and manage production costs, I engineered a structured workflow. Its key stages are:

- Pre-Production & Logic: From script to a detailed shot breakdown, determining every asset, set, and character needed.

- Creative Control: Establishing art direction benchmarks—pacing, tone, and lighting—before any technical execution.

- Model Engineering (LoRAs): I developed several custom LoRAs and character sheets for key characters (including the lead singer, Ama) and objects to ensure identity consistency across hundreds of generated frames.

- Spatial & Character Design: Using a combination of Generative AI and Photoshop to design sets and characters as if they were assets for a traditional animation feature.

- Optimization through Animatics: Before committing to the high time and compute costs of video generation, I constructed a full animatic using still frames synced to the music. This allows rhythm testing and error correction at the lowest possible cost.

- Multi-Model Animation: Leveraging the unique strengths of various Generative AI models to animate specific shots based on the established animatic.

- Final Craft: Traditional post-production and compositing to ensure brand-safe, high-fidelity results.

This project demonstrates my ability to systematize the creative process, proving that emerging technologies are most effective when guided by rigorous planning and traditional filmmaking discipline.